I’ve written previously about the role of dietary fats in liver disease, and I’ve spoken on the subject as well. It’s kind of my “thing” (lipids and liver), so I was excited yesterday when I came across a relatively new paper while browsing PubMed. I found it so interesting, and its final points so salient, that it deserved a post… I hope you think so too!

I’ve written before about “Liver Saving Saturated Fats”. By “hits” it’s one of my most popular posts to date, and it’s a good primer to this post, so if you haven’t read it I’d suggest you go back and give it a read. The long and the short of it, however, is that when it comes to alcoholic (and non-alcoholic) fatty liver disease, saturated fat is not the enemy. On the contrary, dietary saturated fats protect against liver disease while fat sources that are rich in polyunsaturated fatty acids (PUFAs), such as corn oil, soy oil, or just about any industrial “vegetable” oil, are closely associated with the development and progression of liver disease.

One of the great papers on this subject (at least in my opinion) was published by Kirpich et al. in 2012. In this paper, they showed that in mice, diets that contain alcohol and are rich in PUFA lead to increased intestinal permeability, increased circulating endotoxin (from gut bacteria), and increased production of inflammatory cytokines [1]. These pathologies aren’t seen in the absence of alcohol, or when alcohol is consumed as part of a diet high in saturated fat. While I am very fond of the Kirpich paper, I was somewhat frustrated by their choice of dietary fat for the saturated fat group: a mixture of beef tallow and medium chain triglyceride (MCT) oil. The result was a diet with a high degree of saturation, but one made up of several different kinds of saturated fat.

The problem is, not all saturated fats are created equal.

There are a number of important differences between medium chain fatty acids (MCFA) and long chain fatty acids (LCFA). First is the obvious one: size. MCFA are between 6 and 12 carbons in length, while LCFA are longer than 12 carbons. Shorter fats are easily absorbed across intestinal epithelial cells, and MCFA travel quickly to the liver via the portal vein, where they are metabolized. Long chain fatty acids, on the other hand, take a longer route: they are packaged into newly formed chylomicrons and travel via the lymphatics before making it to the liver. Once in the liver, MCFA are short enough to enter mitochondria directly to be used for energy, while LCFA must be “shuttled” into mitochondria via a pathway that requires carnitine and various transferases. These are just some of the basic metabolic differences. Fatty acids are also used by the body for cell signaling, both as second messengers and through modulation of gene transcription and translation, and they’re incorporated into cell membranes. Various dietary fats are handled differently by the body, and it can be difficult to tease out the details with mixed dietary sources and in complex biological systems, but scientists persevere!

The paper I came across yesterday is a concerted effort to begin teasing apart the effects of dietary MCFA and LCFA in the context of chronic alcohol consumption [2]. Previous papers (discussed in my previous post) have shown that MCFA and LCFA (or, frequently, a combination of the two) protect against liver injury associated with chronic alcohol consumption, and some have begun to uncover the mechanisms by which these dietary fats are “liver saving”. To date, however, I had not seen a paper that specifically compared MCFA and LCFA in the context of alcoholic liver disease.

The diets:

To look at the differences between dietary MCFA and LCFA in the context of chronic alcohol consumption, the researchers used two experimental diets in addition to the traditional “pair fed” control and alcohol diets. The control and traditional alcohol-fed diets relied on corn oil for 30% of calories. Corn oil is approximately 50% PUFA, predominantly the omega-6 linoleic acid. The two treatment groups instead relied on medium chain triglycerides or cocoa butter (yes, the stuff in chocolate) for 30% of calories. All the fatty acids in MCT have fewer than 12 carbons (it’s 67% C8:0), while all the fatty acids in cocoa butter have 16 or more carbons (C16:0 and C18:0 predominate). By creating “saturated fat” diets that were exclusively medium chain or long chain in nature, the researchers were able to draw conclusions about the importance of saturated fat chain length in liver pathology. As with the alcohol-fed corn oil diet, 38% of the calories in the MCT and cocoa butter diets came from alcohol. All the experiments in this paper were done after 8 weeks of alcohol consumption.

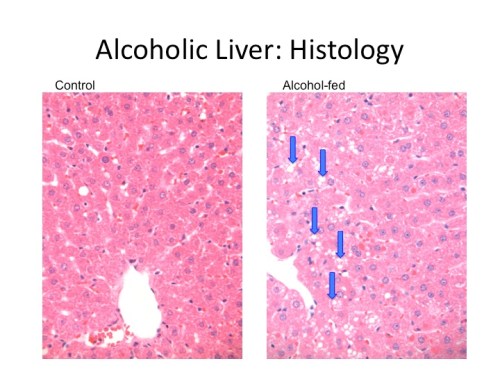

First things first: both MCT and cocoa butter (CB) prevented most of the alcohol-induced pathology seen in the regular (corn oil) alcohol-fed animals. There was significantly less fat accumulation, and none of the inflammatory cell infiltrates seen in the corn oil and alcohol-fed animals. The alcohol-fed animals on the corn oil diet also had more hepatic triglycerides, more hepatic cholesterol, and more hepatic free fatty acids.

The liver can be damaged in a number of ways by alcohol consumption, but one significant mechanism relies on the activation of Kupffer cells (the resident macrophages of the liver). In rats fed ethanol and corn oil, there was an increase in the number and size of these macrophages. These animals also had elevated inflammatory cytokines, which were prevented by MCT and CB feeding.

Previous research has shown that saturated fat consumption prevents an alcohol-induced increase in gut permeability (which allows endotoxin into the circulation, where it can activate macrophages). That research, however, used a diet that combined medium chain and long chain fatty acids. In the current paper, Zhong et al. show that the MCT diet maintains the tight junctions between intestinal cells, normalizing serum endotoxin in the face of alcohol consumption. This is not true for the animals fed the CB diet, which had an increase in circulating endotoxin similar to that of the alcohol-fed animals on the corn oil diet. However, the amount of endotoxin in the livers of the CB-fed animals was on par with the control and MCT-fed animals, and, as mentioned before, their levels of inflammatory cytokines were not elevated. This appears to be due to an increase in the protein levels of ASS1, which binds endotoxin, inactivates it, and clears it. Thus it seems that dietary MCTs maintain the expression of gut tight junction proteins, preventing endotoxin from reaching the circulation, while long chain saturated fats increase endotoxin-binding proteins in the liver. Both prevent endotoxin-induced liver damage, but in distinct ways.

So where does this leave us?*

This paper again shows that saturated fats protect against alcohol-induced liver damage. It digs deeper than past papers, separating the effects of dietary medium chain fatty acids from those of long chain fatty acids. While both medium chain and long chain fats are protective, they appear to act in very different ways. Dietary MCTs prevented the alcohol-induced downregulation of tight junction genes in the intestinal epithelium, preventing endotoxemia and hepatic inflammation. Dietary CB, on the other hand, normalized hepatic endotoxin concentrations by increasing the amount of an endotoxin-binding protein (ASS1), thus increasing the elimination of endotoxin from the liver and preventing hepatic inflammation.

This raises the question (at least for me) of how much MCT is needed to preserve the integrity of the intestinal epithelium. While preventing inflammatory damage by endotoxin in the liver is an admirable feat (well done, chocolate!), I’d personally prefer to keep endotoxin out of the circulation in the first place. We know from the Kirpich paper that a “saturated fat” diet that is 40% fat, using an MCT:beef tallow ratio of 82:18, maintains gut integrity in the face of alcohol consumption and prevents an increase in circulating endotoxin. But how much MCT do you need to maintain gut integrity in the face of an intestinal insult?** This question also matters because there are no natural sources of pure (or concentrated) MCTs (at least to my knowledge). Coconut oil is approximately 50% MCTs, predominantly the C12:0 lauric acid.

This paper makes good strides toward understanding how different types of saturated fat protect against the damage done by chronic alcohol consumption. While it may encourage you to have a coconut chocolate with your next glass of wine (oh, twist my arm!), I think this paper is also important because it confirms the destructive nature of diets high in polyunsaturated fatty acids. ’Tis the season for overindulging, and this paper shows that it’s better to overindulge on chocolate and coconut (or steak and eggs) than on anything bathed in vegetable oils!

Personally I like to get my fats separate from my booze (and with less sugar), but I know some are fans of this seasonal saturated fat/alcohol combo!

* It’s worth noting that this paper also presents metabolite profiles of liver and serum samples from the different groups of animals. The data are way over my head (they analyzed 220 metabolites in liver samples and 167 in serum samples), but I did find it interesting that, regardless of dietary fat source, the three alcohol-fed groups were quite distinct from the control group. Additionally, the CB and MCT groups clustered closely together, clearly distinct from the alcohol-fed corn oil group.

**Interestingly, the Kirpich paper also showed that the saturated fat diet increased the mRNA levels of a number of tight junction proteins compared with the control (i.e., not alcohol-fed) corn oil diet. The current paper showed that dietary MCTs maintained occludin at control levels and increased ZO-1 relative to all other groups (including the control corn oil-fed group).

1. Kirpich, I.A., W. Feng, Y. Wang, Y. Liu, D.F. Barker, S.S. Barve, and C.J. McClain, The type of dietary fat modulates intestinal tight junction integrity, gut permeability, and hepatic toll-like receptor expression in a mouse model of alcoholic liver disease. Alcohol Clin Exp Res, 2012. 36(5): p. 835-46.

2. Zhong, W., Q. Li, G. Xie, X. Sun, X. Tan, W. Jia, and Z. Zhou, Dietary fat sources differentially modulate intestinal barrier and hepatic inflammation in alcohol-induced liver injury in rats. Am J Physiol Gastrointest Liver Physiol, 2013. 305(12): p. G919-32.